Virtualization’s Impact Beyond Operations

The virtualization environment requires new control policies, processes, and standards, and as these come into place they will require new audit processes and procedures.

by David M. Lynch

The adoption of virtualization technology has grown rapidly over the past few years, and for good reason. Although server virtualization helps organizations cut costs, better utilize assets, and improve responsiveness, don’t expect this area of the data center to operate in the same way as the physical side. The lack of management tools, reporting, and automation in the virtual space results in a manual environment that does not integrate well into existing data center compliance and control models. All may seem to be under control, but costs, risks, and data center incidents could all be escalating under the covers.

Although there are many commonalities between physical server and virtual server environments, there are also significant differences that impact management and control systems. For example, every data center has specific processes and procedures for provisioning new servers. These generally involve sign-offs by several teams, eventually resulting in a new server being installed in the data center. When you can create a new virtual machine, literally with the click of a mouse, these existing processes can be easily compromised.

Monitoring, as well as identity and mobility control, can also be a challenge. When you can make 30 identical copies of a specific server, it is hard to keep track of them. This problem is compounded because VMs are, by definition, mobile. They can (and do) move around the environment, which makes them more difficult to trace and manage. They can also compromise data and application segmentation policies.

The uniqueness of the virtual space makes it difficult for the existing data center management tools to operate here, leaving potential blind spots. Thus, your traditional “red flag” or “alert” systems may not be working properly in this space, and are either being circumvented or simply not part of day-to-day operations, potentially leaving you exposed.

This is not a significant issue when the VM environment is small, but the impact of this technology on business controls needs to be considered as the environment grows.

Impact on Business Controls

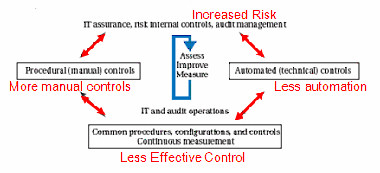

Figure 1 is an example of an integrated IT governance, risk, and compliance (GRC) model, which is a useful starting point for understanding the impact of virtualization.

Figure 1: Standard GRC Model

As virtual environments grow, the effect of the lack of automated controls and the increased manual activity starts to create an imbalance in terms of IT and audit operations, resulting in less effective control and increased risk.

When environments are small and well contained, the impact of these forces is limited. As the environment grows, costs, risks, and data center incidents will escalate, impacting overall business performance. An early signal that this is happening is the need for unplanned IT resources caused by inefficient virtual environments. The “tipping point” is different for each organization, but it will be reached eventually. Consequently, as you ramp up your adoption of server virtualization in the data center, you should be considering its impact on your business controls -- and mitigating it.

Existing Standards and Processes

Start by overlaying existing standards and processes into the virtual space wherever possible. Standards and procedures will probably require tweaking, but ensuring that they are in place will provide needed visibility and control. These include elements common to all servers (physical or virtual), such as configuration and patch management, as well as establishing effective reporting for the virtual side of the data center. (There are a number of free products available that can help, including Embotics V-Scout, PlateSpin Recon Inventory Edition, and Veeam Monitor.)

Provisioning is a specific area to focus on as environments grow. Traditional server provisioning processes can be easily circumvented in the virtual space, so new processes must be established that control both what gets provisioned and who has the authority to authorize new servers.
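As a rough illustration of such a process, the sketch below checks a VM request against two policy tables before anything is created. The template names, zone names, approver names, and the `check_request` helper are all hypothetical; a real process would draw this data from your own change management system.

```python
# Hypothetical policy data (assumptions for illustration only):
# which templates may be provisioned, and who may authorize each zone.
APPROVED_TEMPLATES = {"rhel-base", "win2003-base"}
AUTHORIZERS = {"prod": {"alice"}, "dev": {"alice", "bob"}}


def check_request(template, zone, approver):
    """Return a list of policy problems; an empty list means the request passes."""
    problems = []
    # Control what gets provisioned.
    if template not in APPROVED_TEMPLATES:
        problems.append("template not approved")
    # Control who has the authority to authorize it.
    if approver not in AUTHORIZERS.get(zone, set()):
        problems.append("approver not authorized for zone")
    return problems
```

A request is provisioned only when the returned list is empty, which keeps the sign-off step in the path even though creating the VM itself takes a single click.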

Data separation is also of concern. Every data center has specific rules about application separation, usually driven by either security concerns or compliance issues. As you virtualize applications that fall under these standards on the physical side of the house, consider how you will enforce the same separation on the virtual side.

New Standards and Processes

Adapting your traditional processes won’t be enough. The uniqueness of server virtualization also requires new standards and processes, specifically for identity management, VM mobility and reclamation.

Because VMs can be exact copies of each other, you must be able to uniquely identify them. Naming conventions are a common way of doing this, but they need to be well thought out and, like all manual processes, are subject to error and misuse.
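Because a naming convention is only useful if it is actually followed, it helps to check it mechanically. The sketch below assumes a made-up convention of `<zone>-<application>-<two-digit sequence>` and reports names that break it as well as names used more than once. The pattern, the zone names, and the `audit_vm_names` helper are illustrative assumptions, not a recommended standard.

```python
import re

# Hypothetical convention: <zone>-<app>-<two-digit sequence>, e.g. "prod-billing-01".
# The zone list and the pattern are assumptions for illustration only.
NAME_PATTERN = re.compile(r"^(prod|dev|test|dmz)-[a-z0-9]+-\d{2}$")


def audit_vm_names(names):
    """Return (invalid, duplicates) for a list of VM names, each sorted."""
    # Names that do not follow the convention at all.
    invalid = sorted({n for n in names if not NAME_PATTERN.match(n)})
    # Names that appear more than once, so they no longer identify one VM.
    seen, duplicates = set(), set()
    for n in names:
        if n in seen:
            duplicates.add(n)
        seen.add(n)
    return invalid, sorted(duplicates)
```

Run against a regular inventory export, a check like this catches both the cloned VM that kept its parent's name and the ad hoc name that never followed the convention.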

VMs are, by definition, mobile, whether you use the available load balancing and migration tools or not. As you virtualize applications, it is important to incorporate some level of mobility control. A common approach is to separate production environments from non-production environments and DMZs from the rest of the production area, and to identify applications subject to regulation (e.g., Sarbanes-Oxley) that must be isolated.

This separation is easy to do when deploying the VM initially, but you must determine how to maintain this level of separation throughout the life of the VM. This can be done by implementing separate VirtualCenters for each separate environment (this can get cumbersome and expensive as the number of separation zones increases) or by using other VM lifecycle management products.
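Whichever approach you choose, the underlying check is simple: a VM should only ever run on a host in its own zone. The following is a minimal sketch of that check, assuming each VM and each host carries a zone label. The `Placement` record and its field names are hypothetical and do not correspond to any particular product's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    vm_name: str
    vm_zone: str    # zone assigned to the VM at deployment (hypothetical label)
    host_zone: str  # zone of the host the VM is currently running on


def find_zone_violations(placements):
    """Return the placements where a VM runs on a host outside its zone."""
    return [p for p in placements if p.vm_zone != p.host_zone]
```

Running this after every migration, or on a schedule, shows whether the separation set up at deployment still holds later in the VM's life.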

“Offline” is a new power state introduced by virtualization where VMs are powered off but still reside in the environment. Although there can be valid reasons why you would want an offline VM to stay in the environment, this state brings its own challenges.

When a VM is offline, it is generally invisible to the standard configuration and patch management systems in the data center. The longer a VM is offline, the more out of date it is likely to become. Consequently, you need policies that specify what types of VMs can be left offline, as well as the maximum amount of time that they are allowed to remain offline in the environment.
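A policy of this kind can be expressed as a small table and checked automatically. In the sketch below, the VM types, the time limits, and the `offline_violations` helper are assumptions chosen for illustration; the input is assumed to be a list of offline VMs with the time each was powered off.

```python
from datetime import datetime, timedelta

# Hypothetical policy: maximum offline time per VM type. Types not listed
# here may not be left offline at all. Values are illustrative assumptions.
MAX_OFFLINE = {
    "template": timedelta(days=90),
    "test": timedelta(days=30),
}


def offline_violations(vms, now):
    """vms: iterable of (name, vm_type, powered_off_at) for offline VMs."""
    flagged = []
    for name, vm_type, powered_off_at in vms:
        limit = MAX_OFFLINE.get(vm_type)
        if limit is None:
            # This type of VM is not permitted to sit offline.
            flagged.append((name, "type may not be left offline"))
        elif now - powered_off_at > limit:
            # Permitted type, but it has exceeded its time limit.
            flagged.append((name, "offline longer than allowed"))
    return flagged
```

The flagged list is a natural input for either a patch-before-restart step or a decision to retire the VM.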

VMs can have lifecycles ranging from minutes to years, and one of the more important steps to prevent sprawl is to ensure that redundant or unused VMs are removed in a timely fashion. A simple way of doing this is to attach an expiry date to all VMs when they are created, then use the date to regularly purge obsolete VMs, removing clutter and reclaiming the resources they are consuming.
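The expiry-date approach might look something like the sketch below, which assumes you can export each VM's name and expiry date into a simple mapping. The `split_by_expiry` helper is hypothetical. It only reports candidates and does not delete anything, since the actual removal should go through your own approval process.

```python
from datetime import date


def split_by_expiry(vms, today):
    """vms: dict mapping VM name -> expiry date (None means no date was set).

    Returns (expired, missing_date); both lists are sorted by name.
    """
    # VMs whose expiry date has passed are candidates for reclamation.
    expired = sorted(n for n, d in vms.items() if d is not None and d < today)
    # VMs with no expiry date bypassed the policy and need follow-up.
    missing = sorted(n for n, d in vms.items() if d is None)
    return expired, missing
```

Reporting VMs with no expiry date alongside the expired ones matters, because a VM created outside the normal process is exactly the kind that contributes to sprawl.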

Automate, Automate, Automate

Ultimately, the only way to get your GRC model back in balance is to increase the level of automation in the environment, which, if done right, will have the additional effect of reducing the amount of manual activity and manual controls. This can be done through run book automation (RBA) systems, scripts, or emerging lifecycle management and control systems.

New Audit Standards

As we have discussed, the virtualization environment requires new control policies, processes, and standards, and as these come into place they will require new audit processes and procedures. Consider, for example, the impact of virtualization on the software licensing audit. This issue is still unclear as vendors figure out what their virtual licensing policies are, but it will become increasingly important as the percentage of virtual servers in the environment increases.

Server virtualization started in most data centers as a tactical effort, led by IT, and focused on the ROI associated with server consolidation. Moving from tactical server consolidation to more production-oriented uses of virtualization, and increasing the percentage of your data center that is virtual, requires a new level of thinking about the impact of this technology on data center management and business controls, as well as some significant changes to them, before trouble strikes.

David M. Lynch is the vice president of marketing at Embotics Corporation. You can contact the author at [email protected]